
Some melding between the two seems significant for an AI: a tool that can be developed and structured, a new way to see the problem, a creative approach. The other good thing about randomness is that it helps you find ways to see errors as 'not errors'. Randomness opens up the space in which you can identify errors and, consequently, find mistakes in reasoning (Monte Carlo simulations are traditionally used for this sort of thing). Apply randomness half the time, and apply the knowledge you have the other half. I tend to think that over the long term you get a good perspective if you approach the problem 50/50.

I understand the other parts of the answer, but I can't really see what you are trying to say with them (e.g. boundaries, society, and deciding whether we apply randomness or not).

It's also on the individual level: we're able to dynamically adapt our reinforcement system using meta-cognition (based in the prefrontal neocortex). The recursive feedback loop and the self-interference: that is what's so damn hard about shaping societies (and the market).

> try to define something that both is a function of the system it exists in, and also something that could potentially break the whole system

We don't give the computer words to express itself about this because we never taught it to do that. Computer produces right solution, algorithm survives. Computer produces wrong solution, algorithm dies. The problem is that a computer already has them. This goes back to how the context is defined, how society is defined, and which boundaries and reward functions are established a priori.

Emotions can be simple. I do know that what looks random to one person is not necessarily random to another. But creation, destruction: clearly an oversimplification. It seems very paradoxical to try to define something that is both a function of the system it exists in and something that could potentially break the whole system.
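To make the "randomness half the time, knowledge the other half" idea concrete, here is a minimal sketch on a toy multi-armed bandit. Everything in it is an assumption for illustration: the function name `half_random_bandit`, the `explore_prob` parameter, and the Gaussian reward model are hypothetical and not taken from the discussion. It only shows what a 50/50 mix of random and informed choices could look like.

```python
import random

def half_random_bandit(true_means, steps=2000, explore_prob=0.5, seed=0):
    """Toy bandit: act randomly with probability explore_prob, otherwise use what is known.

    true_means is a hypothetical list of hidden average rewards, one per option.
    Returns the learned reward estimates and how often each option was tried.
    """
    rng = random.Random(seed)
    counts = [0] * len(true_means)
    estimates = [0.0] * len(true_means)
    for _ in range(steps):
        if rng.random() < explore_prob:
            # Apply randomness: pick any option, known to be good or not.
            arm = rng.randrange(len(true_means))
        else:
            # Apply knowledge: pick the option that currently looks best.
            arm = max(range(len(true_means)), key=lambda i: estimates[i])
        # Noisy reward around the hidden mean (assumed Gaussian noise).
        reward = rng.gauss(true_means[arm], 1.0)
        counts[arm] += 1
        # Incremental running average of the rewards seen for this option.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
    return estimates, counts

if __name__ == "__main__":
    estimates, counts = half_random_bandit([0.1, 0.5, 0.9])
    print("estimates:", [round(e, 2) for e in estimates])
    print("counts:", counts)
```

The random half keeps every option sampled, so a misleading early estimate gets corrected over time, while the informed half spends its pulls on whatever currently looks best. Whether 50/50 is the right split is exactly the open question in the discussion; `explore_prob` is there so other ratios can be tried.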
And I think it's important to question which things are worth applying random solutions to, and which things require deep contemplation. It makes us faster, but it's also us standing on the shoulders of giants. Boundaries are redefined when there is conflict, and the less violent and destructive the conflict, the better the chances are for these boundaries and reward functions to continue functioning as they have been developed (rather than being obliterated in their entirety, requiring them to be built from scratch).

> I think we're faster when we find the right boundaries and reward functions instead of randomly trying stuff.

Boundaries and reward functions in human society are part of the 'human social organism' that allows each individual human to function with autonomy in a fashion that is collaborative, aligned with our developed value systems, and allows us to live with stability, security, faith (be it in some sense of wonder, divinity, or in each other, it doesn't matter), and independence. These things are base needs, no matter what variation they manifest with. With that definition I can't see how created artificial life would be much different from the behavior of a computer virus.

> If we look at nature as a massively parallelized computational system that defined "just live" as the reward (not because it "wants" it, but because it just emerged), it shows us how much power we would need if we would try to build a completely random process that gains intelligence. I think we're faster when we find the right boundaries and reward functions instead of randomly trying stuff.

But it takes a lot of time and energy, regardless of our computational power. I think your proposed method works ("just assemble random solutions and test them") because we've already seen it in nature. If we look at nature as a massively parallelized computational system that defined "just live" as the reward (not because it "wants" it, but because it just emerged), it shows us how much power we would need if we tried to build a completely random process that gains intelligence. But that still requires a ton of computational power. If we find a way to design reinforcement systems with the right goals, we can do it the way mother nature has done it for several billion years. Many of those emotions are pre-programmed. There's a lot of intelligence in our hormone-based incentive system: that we feel pain, that we feel sad, etc. The difficult part is developing the reward function.

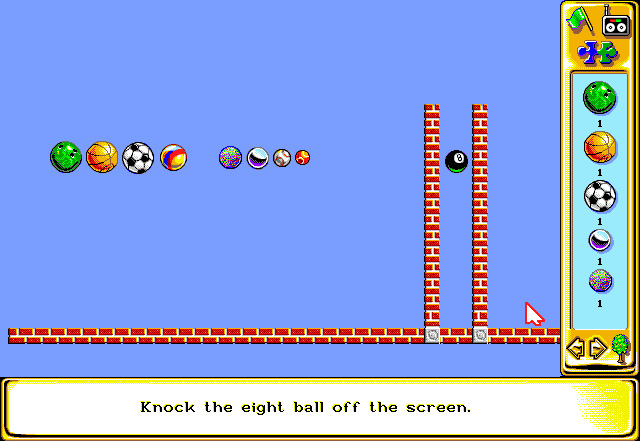

If you watch videos of AIs learning to run or playing Mario, you see that there's definitely "try random shit until your reward function gives you positive feedback" as a method for training AIs.
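As a rough illustration of "try random stuff until the reward function gives positive feedback", here is a minimal random-search sketch. It is not how any particular running or Mario agent is trained. The `reward` function (negative squared distance to a made-up target), the name `random_search`, and the `step_size` parameter are all assumptions chosen to keep the example small.

```python
import random

def reward(candidate, target):
    """Hypothetical reward: higher is better, 0 is a perfect match to the target."""
    return -sum((c - t) ** 2 for c, t in zip(candidate, target))

def random_search(target, steps=5000, step_size=0.1, seed=0):
    """Keep a current best guess, perturb it randomly, keep only improvements."""
    rng = random.Random(seed)
    best = [0.0] * len(target)
    best_reward = reward(best, target)
    for _ in range(steps):
        # Try something random near the current best guess.
        trial = [b + rng.gauss(0.0, step_size) for b in best]
        trial_reward = reward(trial, target)
        # Positive feedback: the change survives. Otherwise it is discarded.
        if trial_reward > best_reward:
            best, best_reward = trial, trial_reward
    return best, best_reward

if __name__ == "__main__":
    best, best_reward = random_search([1.0, -2.0, 0.5])
    print("best guess:", [round(b, 2) for b in best])
    print("reward:", round(best_reward, 4))
```

The loop itself knows nothing about the problem; all of the direction comes from the reward function. That is why, as argued above, designing the reward is the difficult part.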
